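OpenStack (Part 1): Installation and Deployment

OpenStack needs little introduction: it is a powerful open-source cloud computing management platform.

For explanations of the configuration files, see the official documentation (note that this link points to the Pike release): https://docs.openstack.org/pike/configuration/

This post records how I installed and deployed the Mitaka release of OpenStack.

1. Preparing for the deployment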

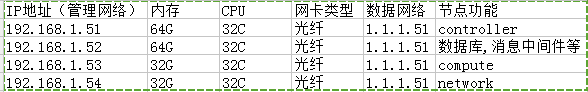

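1.1 Server planning

1.2 Configuring the management and data networks

The management network uses each machine's first NIC, on 192.168.1.0/24. The second NIC (a fiber NIC) carries the data network on 1.1.1.0/24; it needs no gateway because it only carries layer-2 traffic.

1.3 Setting hostnames and configuring hosts

Every node must have its hostname set, and the names in /etc/hosts must match the real hostnames exactly.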

#cat /etc/hosts|grep 192.168

192.168.1.51 controller

192.168.1.52 authentication

192.168.1.53 compute

192.168.1.54 network
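Since a mismatch between a hostname and /etc/hosts tends to show up much later as a confusing service error, it can be worth checking each entry up front. Below is a minimal sketch; `check_hosts_entry` is a hypothetical helper name of my own, and it only assumes a POSIX `awk`.

```shell
#!/usr/bin/env bash
# check_hosts_entry FILE NAME IP
# Succeeds only if FILE contains a line that maps IP to NAME.
# (Hypothetical helper, not part of any OpenStack tooling.)
check_hosts_entry() {
    local file=$1 name=$2 ip=$3
    awk -v name="$name" -v ip="$ip" '
        { sub(/#.*/, "") }                  # ignore trailing comments
        $1 == ip {
            for (i = 2; i <= NF; i++)       # any alias on the line counts
                if ($i == name) found = 1
        }
        END { exit (found ? 0 : 1) }
    ' "$file"
}
```

On a node this could be used as `check_hosts_entry /etc/hosts "$(hostname)" 192.168.1.51 || echo "hosts entry missing"`, with the IP adjusted per node.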

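1.4 Preventing automatic updates

#yum update #(If you use the official yum repositories instead of a local one, bring the system fully up to date first; otherwise later yum installs may run into package version conflicts.) I am using CentOS 7.3 here.

#yum install yum-plugin-priorities -y

#The goal is to keep packages from updating on their own: once one component is updated, other components or features may stop working. There is no need to chase new versions; staying stable is enough.

1.5 Synchronizing time across the nodes

#yum install chrony -y #chrony is a time synchronization tool that syncs more accurately than ntpd

On the control node (it acts as the time server; all nodes must keep the same time):

#vi /etc/chrony.conf #Change the lines below so that only hosts in 192.168.1.0/24 may synchronize

allow 192.168.1.0/24
bindcmdaddress 192.168.1.51

On the other nodes:

#vi /etc/chrony.conf #On every other node, set the time server to the control node's IP address

server 192.168.1.51 iburst
#server 0.centos.pool.ntp.org iburst
#server 1.centos.pool.ntp.org iburst
#server 2.centos.pool.ntp.org iburst
#server 3.centos.pool.ntp.org iburst

On all nodes:

#systemctl enable chronyd.service #Start at boot

#systemctl start chronyd.service #Start the chronyd service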

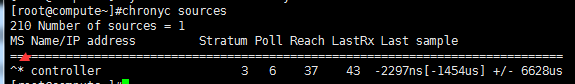

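Verification:

#chronyc sources #Synchronization may take a few minutes; wait until it has fully completed.

#Check every node to confirm that its time is synchronized with the control node. The ^* marker (where the arrow points in the figure) means synchronization succeeded.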
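Rather than eyeballing the `^*` marker on every node, the check can be scripted. This is a small sketch, assuming the `chronyc sources` output format in which the selected source line begins with `^*`; `chrony_synced` and `wait_for_sync` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# chrony_synced: reads `chronyc sources` output on stdin and succeeds
# if some line starts with "^*", i.e. a source is currently selected.
chrony_synced() {
    grep -q '^\^\*'
}

# wait_for_sync [TRIES]: polls chronyc every 10 seconds until a source
# is selected or the tries run out (default 30, about five minutes).
wait_for_sync() {
    local tries=${1:-30}
    while [ "$tries" -gt 0 ]; do
        if chronyc sources | chrony_synced; then
            return 0
        fi
        sleep 10
        tries=$((tries - 1))
    done
    return 1
}
```

Running `wait_for_sync && echo "time is synchronized"` on each node before continuing avoids starting the OpenStack services with skewed clocks.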

1.6 Configuring the yum repository

Normally a deployment should install from a local yum repository, because a local repository guarantees that component versions and their dependencies stay consistent.

#Here, though, let's install OpenStack from the official yum repository. You can enable the yum cache so that the downloaded rpm packages are kept, and later build a local repository from them.

#yum install centos-release-openstack-mitaka -y #Use the official repository

# cat /etc/yum.repos.d/CentOS-OpenStack-mitaka.repo #View the repo file. A self-built repository is simpler (just drop all the packages into one directory); with the official repository this file now needs a small change

# CentOS-OpenStack-mitaka.repo
#
# Please see http://wiki.centos.org/SpecialInterestGroup/Cloud for more
# information

[centos-openstack-mitaka]
name=CentOS-7 - OpenStack mitaka
#CentOS 7.4 is current now, so the version in the path has to change from 7 to 7.3.1611
baseurl=http://mirror.centos.org/centos/7.3.1611/cloud/x86_64/openstack-mitaka/
#The key file no longer works either, so signature checking is turned off
gpgcheck=0
enabled=1

[centos-openstack-mitaka-test]
name=CentOS-7 - OpenStack mitaka Testing
baseurl=http://buildlogs.centos.org/centos/7/cloud/$basearch/openstack-mitaka/
gpgcheck=0
enabled=0

[centos-openstack-mitaka-debuginfo]
name=CentOS-7 - OpenStack mitaka - Debug
baseurl=http://debuginfo.centos.org/centos/7/cloud/$basearch/
gpgcheck=1
enabled=0
gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-SIG-Cloud

[centos-openstack-mitaka-source]
name=CentOS-7 - OpenStack mitaka - Source
baseurl=http://vault.centos.org/centos/7/cloud/Source/openstack-mitaka/
gpgcheck=1
enabled=0
gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-SIG-Cloud

[rdo-trunk-mitaka-tested]
name=OpenStack mitaka Trunk Tested
baseurl=http://buildlogs.centos.org/centos/7/cloud/$basearch/rdo-trunk-mitaka-tested/
gpgcheck=0
enabled=0

#yum upgrade

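On the control node (this can also be run on all nodes):

#yum install python-openstackclient -y #The OpenStack command-line client, which manages the other components through one tool

#yum install openstack-selinux -y

Source: www.51niux.com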

2. Deploying the database and message queue

2.1 Deploying the MariaDB database (on the authentication node, 192.168.1.52)

#yum install mariadb mariadb-server python2-PyMySQL -y #MariaDB is the default database on CentOS 7

# vi /etc/my.cnf.d/openstack.conf #A minimal configuration file

[mysqld]
bind-address = 192.168.1.52 #Bind to this host's own IP address
default-storage-engine = innodb
innodb_file_per_table
max_connections = 40960 #Our servers are well provisioned, so the connection limit is raised
collation-server = utf8_general_ci
character-set-server = utf8

# systemctl enable mariadb.service

# systemctl start mariadb.service

#mysql_secure_installation #Secure the fresh installation; only the prompts that need input are shown below

Set root password? [Y/n] y #Answer y to set a database root password; I use 51niux.com here
New password:
Re-enter new password:
Password updated successfully!
Reloading privilege tables..
 ... Success!
Remove anonymous users? [Y/n] y #Remove anonymous users
 ... Success!
Disallow root login remotely? [Y/n] y #Disallow remote root logins
 ... Success!
Remove test database and access to it? [Y/n] y #Remove the test database
 - Dropping test database...
 ... Success!
 - Removing privileges on test database...
 ... Success!
Reload privilege tables now? [Y/n] y
 ... Success!
Cleaning up...
All done! If you've completed all of the above steps, your MariaDB installation should now be secure.
Thanks for using MariaDB

#A login test confirms that the new password works.

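2.2 Deploying MongoDB for the Telemetry service (on the authentication node, 192.168.1.52)

#OpenStack Telemetry is the metering and statistics project under the OpenStack big tent. It currently consists of four subprojects: Ceilometer, Aodh, Gnocchi, and Panko.

#yum install mongodb-server mongodb -y #Install the MongoDB server

#vim /etc/mongod.conf #Change the following two settings

bind_ip = 192.168.1.52
smallfiles = true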

# systemctl enable mongod.service

# systemctl start mongod.service

2.3 Deploying the RabbitMQ message queue (on the authentication node, 192.168.1.52)

#yum install rabbitmq-server -y

# systemctl enable rabbitmq-server.service

# systemctl start rabbitmq-server.service

#rabbitmqctl add_user openstack 51niux #Create a RabbitMQ user named openstack with the password 51niux

Creating user "openstack" ...

#rabbitmqctl set_permissions openstack ".*" ".*" ".*" #Grant permissions to the new user: the first .* is configure permission on all resources, the second is write permission, and the third is read permission

Setting permissions for user "openstack" in vhost "/" ...

2.4 Deploying memcached to cache tokens for keystone (on the authentication node, 192.168.1.52)

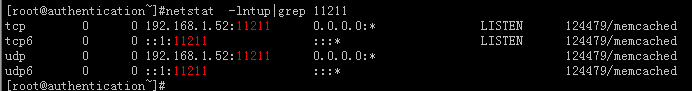

#yum install memcached python-memcached -y

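#vim /etc/sysconfig/memcached #Change the listen IP from 127.0.0.1 to this host's IP; if this is skipped, logging in to the dashboard at the end will fail.

#With the setting below, memcached is reachable from other hosts. Avoid OPTIONS="-l 192.168.1.52,::1": if IPv6 has been disabled, the service will not start
OPTIONS="-l 192.168.1.52"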

# systemctl enable memcached.service

# systemctl start memcached.service


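3. Deploying the keystone identity service

3.1 Database setup on the authentication node (192.168.1.52)

Create the database and user, and grant privileges:

#mysql -uroot -p
MariaDB [(none)]> CREATE DATABASE keystone; #Create the keystone database
MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'192.168.1.%' IDENTIFIED BY '51niux'; #Allow any host in 192.168.1.0/24 to access the keystone database as user keystone with password 51niux
MariaDB [(none)]> flush privileges; #Reload the privileges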
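The same create-and-grant pattern comes back for glance, nova, and neutron, so it may help to generate the statements instead of retyping them. The sketch below only prints SQL; `service_db_sql` is a hypothetical helper name, and the statements mirror the ones used in this post (the `IDENTIFIED BY` form inside `GRANT` is assumed to be accepted by the MariaDB version on CentOS 7, as it was here).

```shell
#!/usr/bin/env bash
# service_db_sql DB USER PASSWORD HOST_PATTERN
# Prints the SQL that creates a service database and grants a user
# access to it from HOST_PATTERN (for example '192.168.1.%' or '%').
service_db_sql() {
    local db=$1 user=$2 pass=$3 host=$4
    printf 'CREATE DATABASE %s;\n' "$db"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
        "$db" "$user" "$host" "$pass"
    printf 'FLUSH PRIVILEGES;\n'
}
```

For example, `service_db_sql keystone keystone 51niux '192.168.1.%' | mysql -uroot -p` would run the three statements in one go. Review the printed SQL first if the password contains quotes, since the helper does no escaping.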

3.2 Deploying the keystone service (on the control node, 192.168.1.51)

Install keystone and the httpd web server

#yum install openstack-keystone httpd mod_wsgi -y

Edit keystone.conf

#openssl rand -hex 10 #Generate a random token; it is needed in the next step

e412000f353bfb82c7d5

#vim /etc/keystone/keystone.conf

[DEFAULT]
#Whoever holds this token is treated as the administrator
admin_token = e412000f353bfb82c7d5
[database]
#Database connection: keystone:51niux is the database user and password; @authentication/keystone is the host to connect to and the database name
connection = mysql+pymysql://keystone:51niux@authentication/keystone
[token]
#Issue tokens with the Fernet provider
provider = fernet
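The admin_token is simply 10 random bytes written as 20 hex characters. On a host where openssl is not installed, something like the following sketch should give an equivalent value; it assumes `/dev/urandom`, `head -c`, `od`, and `tr` are available, and `gen_admin_token` is a hypothetical helper name rather than anything keystone provides.

```shell
#!/usr/bin/env bash
# gen_admin_token: prints 20 lowercase hex characters (10 random bytes),
# the same shape of value that `openssl rand -hex 10` produces.
gen_admin_token() {
    head -c 10 /dev/urandom | od -An -tx1 | tr -d ' \n'
}
```

Usage would be `token=$(gen_admin_token)`, after which `$token` goes into admin_token in keystone.conf and later into OS_TOKEN.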

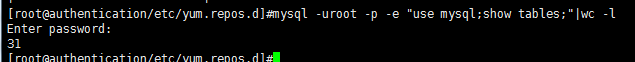

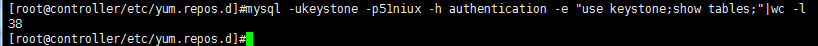

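Populate the database

#yum install mysql mysql-devel -y #Install these client packages if they are missing; nothing needs to be started

#su -s /bin/sh -c "keystone-manage db_sync" keystone

#The figure above shows the verification: logging in to the database remotely shows that the tables have been created.

Initialize the Fernet keys

#keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone #Initialize the Fernet keys; the two options name the user and group that will own the key repository

Configure the Apache service

#vim /etc/httpd/conf/httpd.conf

ServerName controller

#vim /etc/httpd/conf.d/wsgi-keystone.conf #Create a new configuration file

#These two ports front the applications defined in /etc/keystone/keystone-paste.ini
Listen 5000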

Listen 35357

<VirtualHost *:5000>

WSGIDaemonProcess keystone-public processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}

WSGIProcessGroup keystone-public

WSGIScriptAlias / /usr/bin/keystone-wsgi-public

WSGIApplicationGroup %{GLOBAL}

WSGIPassAuthorization On

ErrorLogFormat "%{cu}t %M"

ErrorLog /var/log/httpd/keystone-error.log

CustomLog /var/log/httpd/keystone-access.log combined

<Directory /usr/bin>

Require all granted

</Directory>

</VirtualHost>

<VirtualHost *:35357>

WSGIDaemonProcess keystone-admin processes=5 threads=1 user=keystone group=keystone display-name=%{GROUP}

WSGIProcessGroup keystone-admin

WSGIScriptAlias / /usr/bin/keystone-wsgi-admin

WSGIApplicationGroup %{GLOBAL}

WSGIPassAuthorization On

ErrorLogFormat "%{cu}t %M"

ErrorLog /var/log/httpd/keystone-error.log

CustomLog /var/log/httpd/keystone-access.log combined

<Directory /usr/bin>

Require all granted

</Directory>

</VirtualHost>

Start the web service

# systemctl enable httpd.service

# systemctl start httpd.service

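3.3 Creating the service entity and API endpoints (on the control node, 192.168.1.51)

First set the administrator environment variables; they provide the rights needed for the steps that follow

#export OS_TOKEN=e412000f353bfb82c7d5 #This is the token generated above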

#export OS_URL=http://controller:35357/v3

#export OS_IDENTITY_API_VERSION=3

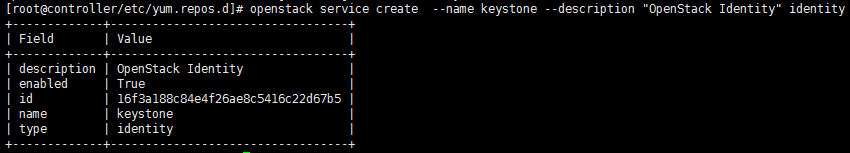

基于上一步给的权限,创建认证服务实体(目录服务)

# openstack service create --name keystone --description "OpenStack Identity" identity

#上图为执行成功后的结果

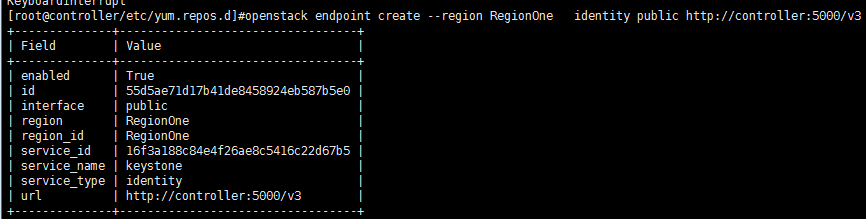

基于上一步建立的服务实体,创建访问该实体的三个api端点

#openstack endpoint create --region RegionOne identity public http://controller:5000/v3

#上图为执行成功后的结果

#openstack endpoint create --region RegionOne identity internal http://controller:5000/v3 #如果成功也会出现类似于上面的截图

#openstack endpoint create --region RegionOne identity admin http://controller:35357/v3 #同上
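Every service in this post gets the same trio of public, internal, and admin endpoints, so the three commands can be generated from one call. The sketch below is a dry run that only prints the commands; `endpoint_cmds` is a hypothetical helper name, and the region defaults to RegionOne as used throughout this post.

```shell
#!/usr/bin/env bash
# endpoint_cmds SERVICE_TYPE PUBLIC_URL INTERNAL_URL ADMIN_URL [REGION]
# Prints the three `openstack endpoint create` commands for a service.
endpoint_cmds() {
    local svc=$1 public=$2 internal=$3 admin=$4 region=${5:-RegionOne}
    printf 'openstack endpoint create --region %s %s public %s\n' \
        "$region" "$svc" "$public"
    printf 'openstack endpoint create --region %s %s internal %s\n' \
        "$region" "$svc" "$internal"
    printf 'openstack endpoint create --region %s %s admin %s\n' \
        "$region" "$svc" "$admin"
}
```

After checking the printed commands, they can be piped to `sh`, for example `endpoint_cmds image http://controller:9292 http://controller:9292 http://controller:9292 | sh`. URLs that contain shell metacharacters, such as the compute URLs with parentheses, are safer to run by hand.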

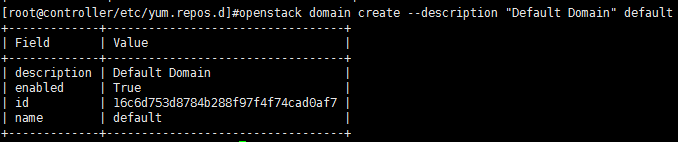

3.4 创建域、租户、用户、角色,把四个元素关联到一起(控制节点192.168.1.51上面的操作)

创建一个公共的域名

#openstack domain create --description "Default Domain" default

创建管理员:admin

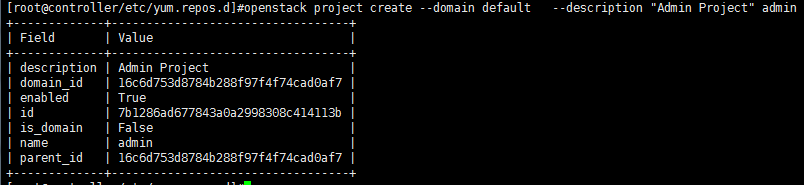

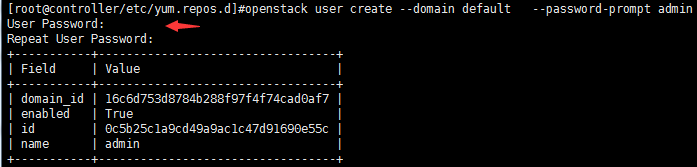

#openstack project create --domain default --description "Admin Project" admin #创建admin项目

#openstack user create --domain default --password-prompt admin #创建admin用户的密码,会提示你输入两次密码,这里51niux当密码吧

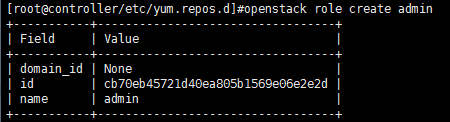

#openstack role create admin #创建admin角色

#openstack role add --project admin --user admin admin #将admin角色添加到admin项目和admin用户中

创建普通用户:demo

#openstack project create --domain default --description "Demo Project" demo #创建demo项目

#openstack user create --domain default --password-prompt demo #创建demo用户并给此用户设置密码,依旧51niux当密码

#openstack role create user #创建user角色

#openstack role add --project demo --user demo user #将user角色添加到demo项目和demo用户中

创建service项目:

#openstack project create --domain default --description "Service Project" service #创建service项目

#为后续的服务创建统一租户service,后面每搭建一个新的服务都需要在keystone中执行四种操作:1.建租户 2.建用户 3.建角色 4.做关联,后面所有的服务公用一个租户service,都是管理员角色admin,所以实际上后续的服务安装关于keysotne的操作只剩2,4步骤了。

3.5 Verification (on the control node, 192.168.1.51)

#vim /etc/keystone/keystone-paste.ini

[pipeline:public_api]
pipeline = cors sizelimit url_normalize request_id build_auth_context token_auth json_body ec2_extension public_service
[pipeline:admin_api]
pipeline = cors sizelimit url_normalize request_id build_auth_context token_auth json_body ec2_extension s3_extension admin_service
[pipeline:api_v3]
pipeline = cors sizelimit url_normalize request_id build_auth_context token_auth json_body ec2_extension_v3 s3_extension service_v3

#Remove admin_token_auth from the [pipeline:public_api], [pipeline:admin_api], and [pipeline:api_v3] sections (the pipelines above show the result). After that, anyone who shows up with the admin token is treated as unauthenticated and ignored.
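Editing three long pipeline lines by hand is easy to get wrong, so here is a sketch that does the removal with sed. It assumes the pipeline lines have the form `pipeline = ... admin_token_auth ...` with single spaces between filters; `strip_admin_token_auth` is a hypothetical helper name, and it keeps a `.bak` copy of the original file.

```shell
#!/usr/bin/env bash
# strip_admin_token_auth FILE
# Removes the admin_token_auth filter from every "pipeline =" line in
# FILE, leaving a backup in FILE.bak. Other filters such as token_auth
# are untouched because the match includes the "admin_" prefix.
strip_admin_token_auth() {
    local file=$1
    sed -i.bak -e '/^pipeline *=/ s/ admin_token_auth//' "$file"
}
```

Typical use would be `strip_admin_token_auth /etc/keystone/keystone-paste.ini`, followed by `grep -c admin_token_auth /etc/keystone/keystone-paste.ini` to see whether any mention is left outside the pipeline lines (the filter definition section itself may still mention it).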

#unset OS_TOKEN OS_URL #Unset the environment variables defined earlier

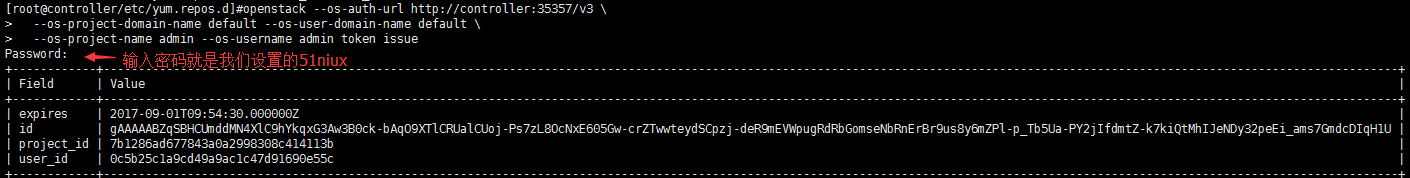

#openstack --os-auth-url http://controller:35357/v3 \

--os-project-domain-name default --os-user-domain-name default \

--os-project-name admin --os-username admin token issue

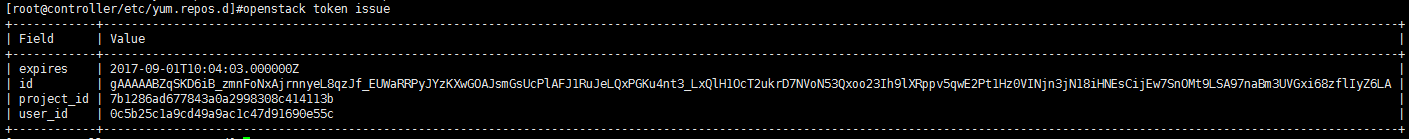

#上图表示验证成功,能正常的获取数据。

3.6 Creating client environment scripts (on the control node, 192.168.1.51)

As the verification above shows, several parameters have to be typed each time. To streamline this, put the variables in a script and source it whenever you need to run commands.

# vim /root/admin-openrc #Create an environment script for the administrator

export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=51niux
export OS_AUTH_URL=http://controller:35357/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2

# vim /root/demo-openrc #Create another environment script for the regular user

export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=demo
export OS_USERNAME=demo
export OS_PASSWORD=51niux
export OS_AUTH_URL=http://controller:5000/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2

The result:

#source /root/admin-openrc #Load the admin environment variables

#openstack token issue

#The test succeeds, and no password has to be typed because it is already set in the script.
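A common slip is running `openstack` commands in a fresh shell where the openrc file has not been sourced. The following sketch reports which of the variables from the scripts above are missing; `missing_os_vars` is a hypothetical helper name, and the variable list is simply the one used in this post (minus OS_IMAGE_API_VERSION, which only matters for glance).

```shell
#!/usr/bin/env bash
# missing_os_vars: prints the names of unset or empty OS_* variables
# and returns non-zero if any are missing; prints nothing otherwise.
missing_os_vars() {
    local name missing=""
    for name in OS_PROJECT_DOMAIN_NAME OS_USER_DOMAIN_NAME OS_PROJECT_NAME \
                OS_USERNAME OS_PASSWORD OS_AUTH_URL OS_IDENTITY_API_VERSION; do
        # eval expands the variable whose name is stored in $name
        eval "[ -n \"\${$name:-}\" ]" || missing="$missing $name"
    done
    [ -z "$missing" ] && return 0
    printf '%s\n' "${missing# }"
    return 1
}
```

For example, `missing_os_vars || echo "source /root/admin-openrc first"` could sit at the top of any wrapper script that calls the client.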


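4. Setting up the glance image service

4.1 Creating the database and user (on the authentication node, 192.168.1.52)

#mysql -uroot -p
MariaDB [(none)]> CREATE DATABASE glance; #Create the glance database
MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY '51niux'; #Grant access to the glance database from any host, as user glance with password 51niux
MariaDB [(none)]> flush privileges;

4.2 Keystone operations (on the control node, 192.168.1.51)

Create a glance user and associate it with the admin role on the service project:

As mentioned above, all later services are placed in the single service project; each one needs its own user, which is then granted the admin role on that project.

#source /root/admin-openrc #Not needed if you are still in the same shell session

#openstack user create --domain default --password-prompt glance #Create the glance user, again with the password 51niux

#openstack role add --project service --user glance admin #Grant the admin role to the glance user on the service project

Create the service entity:

# openstack service create --name glance --description "OpenStack Image" image #Create the glance service

Create the endpoints: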

# openstack endpoint create --region RegionOne image public http://controller:9292

# openstack endpoint create --region RegionOne image internal http://controller:9292

# openstack endpoint create --region RegionOne image admin http://controller:9292

1.3 安装软件并配置(控制节点192.168.1.51上面的操作)

安装软件:

#yum install openstack-glance -y

修改glance-api.conf配置:

#vim /etc/glance/glance-api.conf

[database] connection = mysql+pymysql://glance:51niux@authentication/glance [keystone_authtoken] auth_url = http://controller:5000 memcached_servers = authentication:11211 auth_type = password project_domain_name = default user_domain_name = default project_name = service username = glance password = 51niux [paste_deploy] flavor = keystone [glance_store] stores = file,http default_store = file filesystem_store_datadir = /var/lib/glance/images/

#这里的数据库连接配置是用来初始化生成数据库表结构,不配置无法生成数据库表结构。glance-api不配置database对创建vm无影响,对使用metada有影响,日志报错:ERROR glance.api.v2.metadef_namespaces。

修改glance-registry.conf配置:

#vim /etc/glance/glance-registry.conf

[database] ##这里的数据库配置是用来glance-registry检索镜像元数据 connection = mysql+pymysql://glance:51niux@authentication/glance

新建目录:

#mkdir /var/lib/glance/images/

#chown glance. /var/lib/glance/images/

同步数据库:

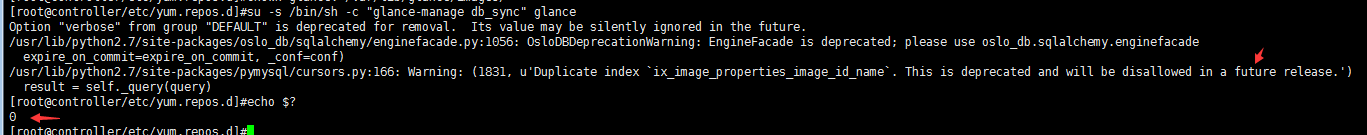

#su -s /bin/sh -c "glance-manage db_sync" glance #此处会报一些future的问题,请忽略,如下图

启动服务:

#systemctl enable openstack-glance-api.service openstack-glance-registry.service

#systemctl start openstack-glance-api.service openstack-glance-registry.service

验证操作:

#source /root/admin-openrc

#wget download.cirros-cloud.net/0.3.4/cirros-0.3.4-x86_64-disk.img #下载测试系统镜像到本地

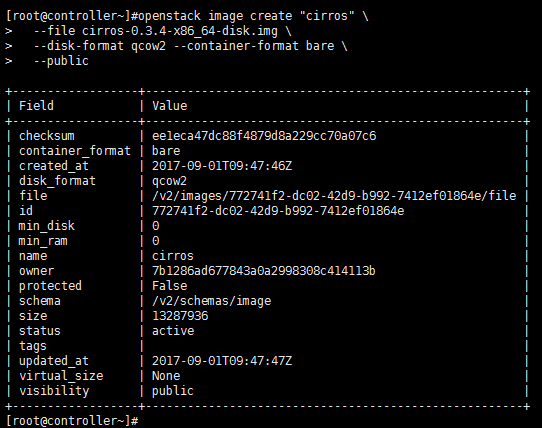

#openstack image create "cirros" \ --file cirros-0.3.4-x86_64-disk.img \ --disk-format qcow2 --container-format bare \ --public

#上面的命令是创建一个qcow2格式的名称叫做cirros,镜像为cirros-0.3.4-x86_64-disk.img,图片的容器格式为bare,共享此镜像,共享后其他用户也可以使用此镜像启动instance。
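Because `--disk-format` is only a label and glance does not convert the file, a quick sanity check that the download really is a qcow2 file can save a confusing boot failure later. The sketch below relies on the fact that qcow2 files begin with the four magic bytes `Q`, `F`, `I`, `0xfb`; `is_qcow2` is a hypothetical helper name, and `qemu-img info` remains the more thorough check where it is installed.

```shell
#!/usr/bin/env bash
# is_qcow2 FILE
# Succeeds if FILE starts with the qcow magic bytes 51 46 49 fb ("QFI\xfb").
is_qcow2() {
    [ "$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')" = "514649fb" ]
}
```

For instance, `is_qcow2 cirros-0.3.4-x86_64-disk.img || echo "not a qcow2 image"` could be run before uploading.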

#上图为执行创建操作之后的打印输出。

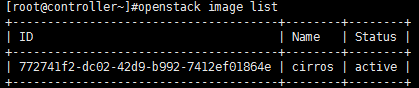

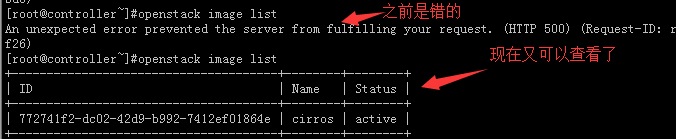

#openstack image list #查看下镜像列表

#从结果中可以看出有一个叫做cirros的镜像状态已经是active存活状态了。


5. Deploying the compute service

5.1 Creating the databases and user (on the authentication node, 192.168.1.52)

#mysql -uroot -p
MariaDB [(none)]> CREATE DATABASE nova_api;
MariaDB [(none)]> CREATE DATABASE nova;
MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY '51niux';
MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY '51niux';
MariaDB [(none)]> flush privileges;

5.2 Keystone operations (on the control node, 192.168.1.51)

#source /root/admin-openrc

#openstack user create --domain default --password-prompt nova #Again use 51niux as the password

#openstack role add --project service --user nova admin

#openstack service create --name nova --description "OpenStack Compute" compute

#openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1/%\(tenant_id\)s

#openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1/%\(tenant_id\)s

#openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1/%\(tenant_id\)s

5.3 控制节点(192.168.1.51)上面安装相关软件包并进行配置

安装软件包:

#yum install openstack-nova-api openstack-nova-conductor \ openstack-nova-console openstack-nova-novncproxy \ openstack-nova-scheduler -y

修改配置:

#vim /etc/nova/nova.conf

[DEFAULT] enabled_apis=osapi_compute,metadata rpc_backend = rabbit auth_strategy = keystone my_ip = 192.168.1.51 use_neutron = True firewall_driver = nova.virt.firewall.NoopFirewallDriver [api_database] connection = mysql+pymysql://nova:51niux@authentication/nova_api [database] connection = mysql+pymysql://nova:51niux@authentication/nova [oslo_messaging_rabbit] rabbit_host = authentication rabbit_userid = openstack rabbit_password = 51niux [keystone_authtoken] auth_url = http://controller:5000 memcached_servers = authentication:11211 auth_type = password project_domain_name = default user_domain_name = default project_name = service username = nova password = 51niux [vnc] #创建主机之后,要vnc代理管理主机 vncserver_listen = 192.168.1.51 vncserver_proxyclient_address = 192.168.1.51 [oslo_concurrency] lock_path = /var/lib/nova/tmp

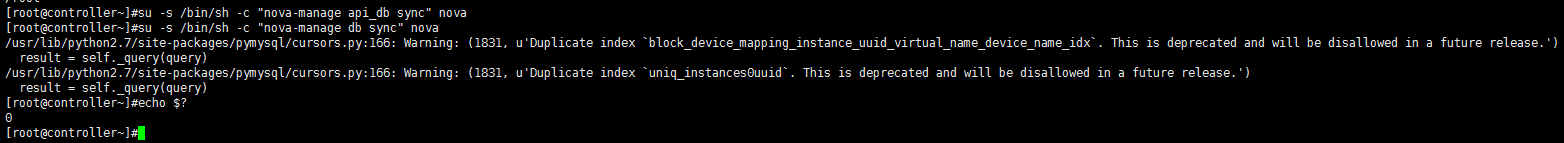

同步数据库:(此处会报一些future的问题,自行忽略)

#su -s /bin/sh -c "nova-manage api_db sync" nova

#su -s /bin/sh -c "nova-manage db sync" nova

启动服务:

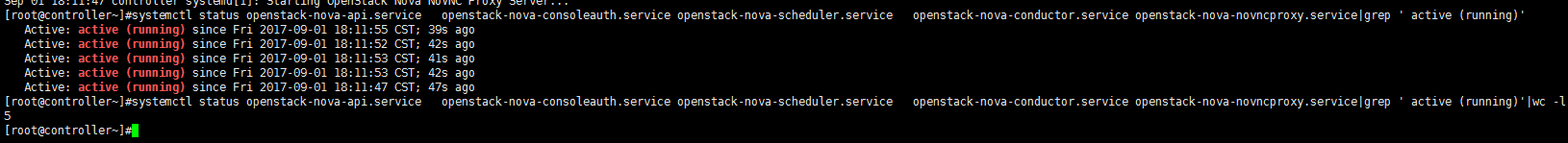

systemctl enable openstack-nova-api.service \ openstack-nova-consoleauth.service openstack-nova-scheduler.service \ openstack-nova-conductor.service openstack-nova-novncproxy.service systemctl start openstack-nova-api.service \ openstack-nova-consoleauth.service openstack-nova-scheduler.service \ openstack-nova-conductor.service openstack-nova-novncproxy.service

#systemctl status openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service|grep ' active (running)'

#可以验证一下是不是五个服务都启动了

5.4 Configuring the compute node (192.168.1.53)

Install the packages:

#yum install openstack-nova-compute libvirt-daemon-lxc -y

Edit the configuration:

#vim /etc/nova/nova.conf

[DEFAULT]
rpc_backend = rabbit
auth_strategy = keystone
my_ip = 192.168.1.53
use_neutron = True
firewall_driver = nova.virt.firewall.NoopFirewallDriver
[oslo_messaging_rabbit]
rabbit_host = authentication
rabbit_userid = openstack
rabbit_password = 51niux
[vnc]
enabled = True
vncserver_listen = 0.0.0.0
vncserver_proxyclient_address = 192.168.1.53
novncproxy_base_url = http://192.168.1.51:6080/vnc_auto.html
[glance]
api_servers = http://controller:9292
[oslo_concurrency]
lock_path = /var/lib/nova/tmp

Check whether the machine supports hardware virtualization:

#egrep -c '(vmx|svm)' /proc/cpuinfo #A non-zero result means this machine supports hardware virtualization

32

#If egrep -c '(vmx|svm)' /proc/cpuinfo returns 0, edit /etc/nova/nova.conf as follows:
#vim /etc/nova/nova.conf
[libvirt]
virt_type = qemu
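The decision above can be written as a small function, which is handy when many compute nodes are provisioned by script. This is a sketch under one assumption of mine: that `kvm` is the value wanted when the CPU flags are present (the post only states the `qemu` fallback). `pick_virt_type` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# pick_virt_type [CPUINFO_FILE]
# Prints "kvm" if the CPU flags include vmx (Intel) or svm (AMD),
# otherwise "qemu". Reads /proc/cpuinfo unless another file is given.
pick_virt_type() {
    local cpuinfo=${1:-/proc/cpuinfo}
    if grep -Eq '(vmx|svm)' "$cpuinfo"; then
        echo kvm
    else
        echo qemu
    fi
}
```

On a compute node, `echo "virt_type = $(pick_virt_type)"` prints the line to place under [libvirt] in /etc/nova/nova.conf.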

启动服务:

#systemctl enable libvirtd.service openstack-nova-compute.service

#systemctl start libvirtd.service openstack-nova-compute.service

#如果启动有问题请查看:/var/log/目录下面与服务名称为目录下面对应的日志文件

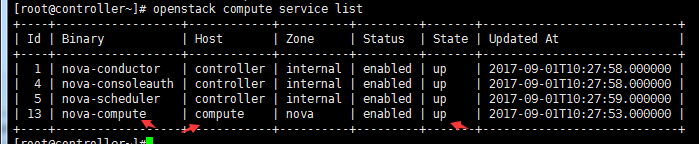

5.5 控制节点上面验证一下

#source /root/admin-openrc

# openstack compute service list

#从结果上面看计算节点已经添加进来并且是up状态。

六、网络节点的部署

6.1 建库建用户(授权节点192.168.1.52上面的操作)

#mysql -uroot -p MariaDB [(none)]> CREATE DATABASE neutron; MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY '51niux'; MariaDB [(none)]> flush privileges;

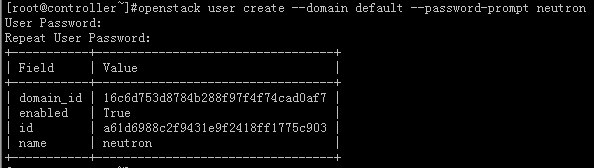

6.2 keystone相关操作(控制节点192.168.1.51上面的操作)

#source /root/admin-openrc

创建“neutron”用户:

#openstack user create --domain default --password-prompt neutron #创建一个neutron用户,密码依旧为51niux

添加“admin”角色到“neutron”用户:

#openstack role add --project service --user neutron admin

创建"neutron"服务实体:

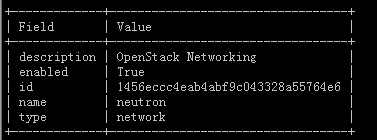

#openstack service create --name neutron --description "OpenStack Networking" network

创建网络服务API端点:

#openstack endpoint create --region RegionOne network public http://controller:9696

#openstack endpoint create --region RegionOne network internal http://controller:9696

#openstack endpoint create --region RegionOne network admin http://controller:9696

6.3 Neutron setup on the control node (192.168.1.51)

Install the packages:

#yum install openstack-neutron openstack-neutron-ml2 python-neutronclient which -y

Configure the server component:

Edit neutron.conf:

#vim /etc/neutron/neutron.conf

[DEFAULT]
#Use ML2 (Modular Layer 2) as the core plugin
core_plugin = ml2
#Enable the router service plugin
service_plugins = router
#Allow overlapping IP addresses
allow_overlapping_ips = True
#Use RabbitMQ as the messaging backend
rpc_backend = rabbit
#Authenticate through keystone
auth_strategy = keystone
#Notify nova when port status or port data changes
notify_nova_on_port_status_changes = True
notify_nova_on_port_data_changes = True
[oslo_messaging_rabbit]
#Connection to the RabbitMQ message queue
rabbit_host = authentication
rabbit_userid = openstack
rabbit_password = 51niux
[database]
#Database access
connection = mysql+pymysql://neutron:51niux@authentication/neutron
[keystone_authtoken]
#Access to the identity service
auth_url = http://controller:5000
memcached_servers = authentication:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = 51niux
[nova]
#Lets the Networking service notify Compute of network topology changes
auth_url = http://controller:5000
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = nova
password = 51niux
[oslo_concurrency]
#Lock path
lock_path = /var/lib/neutron/tmp

Edit the ML2 configuration file

#vim /etc/neutron/plugins/ml2/ml2_conf.ini

[ml2]
#Network types that may be created
type_drivers = flat,vlan,vxlan,gre
#Tenant network type: enable VXLAN private networks
tenant_network_types = vxlan
#Mechanism drivers: openvswitch implements the virtual switch; l2population optimizes the network, for example its address learning
mechanism_drivers = openvswitch,l2population
#Enable the port security extension driver
extension_drivers = port_security
[ml2_type_flat]
#Configure the public virtual network as a flat network; this kind of network is tied to the physical network, and tenants cannot build private networks on it
flat_networks = provider
[ml2_type_vxlan]
#VXLAN network identifier range for private networks
vni_ranges = 1:1000
[securitygroup]
#Enable ipset to make security group rules more efficient
enable_ipset = True

Edit nova.conf:

#vim /etc/nova/nova.conf

[neutron]
#Credentials nova uses to talk to neutron
url = http://controller:9696
auth_url = http://controller:5000
auth_type = password
project_domain_name = default
user_domain_name = default
region_name = RegionOne
project_name = service
username = neutron
password = 51niux
service_metadata_proxy = True
metadata_proxy_shared_secret = 51niux

Create the symbolic link:

#ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

#The Networking service init scripts expect a symbolic link /etc/neutron/plugin.ini that points to the ML2 plugin configuration file /etc/neutron/plugins/ml2/ml2_conf.ini

Sync the database (this prints some warnings about future deprecations; ignore them):

#su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \
  --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

Restart the nova API service:

#systemctl restart openstack-nova-api.service

Start the neutron service:

# systemctl enable neutron-server.service

# systemctl start neutron-server.service

6.4 Configuring the network node

The layer-2 data address 1.1.1.54 should already be configured. If it is not, set the server's second NIC to 1.1.1.54 (or whatever your plan calls for); layer-2 traffic needs no gateway.

Set the kernel parameters

#vim /etc/sysctl.conf

net.ipv4.ip_forward=1
net.ipv4.conf.all.rp_filter=0
net.ipv4.conf.default.rp_filter=0

#sysctl -p

Install the packages

#yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-openvswitch -y

Configure the components

#vim /etc/neutron/neutron.conf

[DEFAULT]
core_plugin = ml2
service_plugins = router
allow_overlapping_ips = True
rpc_backend = rabbit
auth_strategy = keystone
[oslo_messaging_rabbit]
rabbit_host = authentication
rabbit_userid = openstack
rabbit_password = 51niux
[oslo_concurrency]
lock_path = /var/lib/neutron/tmp

Configure openvswitch_agent

#vim /etc/neutron/plugins/ml2/openvswitch_agent.ini

[ovs]
#IP address on the data network
local_ip=1.1.1.54
#Bridge used for the external network
bridge_mappings=external:br-ex
[agent]
#Tunnel types
tunnel_types=gre,vxlan
#Enable the L2 population optimization
l2_population=True
#Prevent ARP spoofing
prevent_arp_spoofing=True

Configure the L3 agent

#vim /etc/neutron/l3_agent.ini #Enables layer-3 forwarding

[DEFAULT]
interface_driver=neutron.agent.linux.interface.OVSInterfaceDriver
external_network_bridge=br-ex

Configure the DHCP agent

#vim /etc/neutron/dhcp_agent.ini

[DEFAULT]
interface_driver=neutron.agent.linux.interface.OVSInterfaceDriver
dhcp_driver=neutron.agent.linux.dhcp.Dnsmasq
enable_isolated_metadata=True

Configure the metadata agent

#vim /etc/neutron/metadata_agent.ini

[DEFAULT]
nova_metadata_ip = controller
metadata_proxy_shared_secret= 51niux

Start the services

#systemctl enable neutron-openvswitch-agent.service neutron-l3-agent.service \
  neutron-dhcp-agent.service neutron-metadata-agent.service
#systemctl start neutron-openvswitch-agent.service neutron-l3-agent.service \
  neutron-dhcp-agent.service neutron-metadata-agent.service

Create the bridge

# ovs-vsctl add-br br-ex

# cat /etc/sysconfig/network-scripts/ifcfg-eth0 #Edit ifcfg-eth0, mainly to remove its IP settings. Ideally another NIC would be added for this; to keep the test simple, the management NIC is handed over to the bridge here.

TYPE=Ethernet
BOOTPROTO=none
DEFROUTE=yes
PEERDNS=yes
PEERROUTES=yes
IPV4_FAILURE_FATAL=no
IPV6INIT=yes
IPV6_AUTOCONF=yes
IPV6_DEFROUTE=yes
IPV6_PEERDNS=yes
IPV6_PEERROUTES=yes
IPV6_FAILURE_FATAL=no
NAME=eth0
DEVICE=eth0
ONBOOT=yes

# cat /etc/sysconfig/network-scripts/ifcfg-br-ex #Configuration file for the bridge

DEVICE=br-ex
TYPE=Ethernet
ONBOOT="yes"
BOOTPROTO="none"
IPADDR=192.168.1.54
GATEWAY=192.168.1.254
NETMASK=255.255.255.0
DNS1=114.114.114.114
DNS2=223.5.5.5
#This setting is required
NM_CONTROLLED=no

#service NetworkManager stop #Stop this service; otherwise restarting the network will fail

#systemctl disable NetworkManager

#systemctl restart network && ovs-vsctl add-port br-ex eth0 & #Run both commands on one line; otherwise the connection drops right after the restart and the port never gets added

# ovs-vsctl show #Show the bridge details

1d15e80b-c269-473e-858c-12de577856e9
    Bridge br-ex
        Port br-ex
            Interface br-ex
                type: internal
        Port "eth0"
            Interface "eth0"
    ovs_version: "2.6.1"

6.5 计算节点的配置(192.168.1.53上面的配置)

修改内核参数:

#vim /etc/sysctl.conf

net.ipv4.conf.all.rp_filter=0 net.ipv4.conf.default.rp_filter=0 net.bridge.bridge-nf-call-iptables=1 net.bridge.bridge-nf-call-ip6tables=1

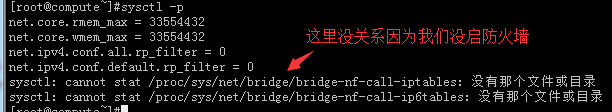

#sysctl -p

数据IP:1.1.1.53 如果没配置要记得配置一下哦。

安装软件包:

#yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-openvswitch -y

编辑neutron配置:

#vim /etc/neutron/neutron.conf

[DEFAULT] rpc_backend = rabbit auth_strategy = keystone [oslo_messaging_rabbit] rabbit_host = authentication rabbit_userid = openstack rabbit_password = 51niux [oslo_concurrency] lock_path = /var/lib/neutron/tmp

编辑openvswitch_agent:

#vim /etc/neutron/plugins/ml2/openvswitch_agent.ini

[ovs] local_ip = 1.1.1.53 #计算节点的数据网络的IP [agent] tunnel_types = gre,vxlan l2_population = True prevent_arp_spoofing = True [securitygroup] firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver enable_security_group = True

编辑nova配置:

#vim /etc/nova/nova.conf

[neutron] url = http://controller:9696 auth_url = http://controller:5000 auth_type = password project_domain_name = default user_domain_name = default region_name = RegionOne project_name = service username = neutron password = 51niux

启动服务:

# systemctl enable neutron-openvswitch-agent.service

# systemctl start neutron-openvswitch-agent.service

# systemctl restart openstack-nova-compute.service


7. Deploying the dashboard (on the control node, 192.168.1.51)

Install the package:

#yum install openstack-dashboard -y

Configure:

#vim /etc/openstack-dashboard/local_settings

OPENSTACK_HOST = "controller"
ALLOWED_HOSTS = ['*', ]
#Note: this line may need to be added
SESSION_ENGINE = 'django.contrib.sessions.backends.cache'
CACHES = {
    'default': {
        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',
        'LOCATION': 'authentication:11211',
    }
}
OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST
OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True
OPENSTACK_API_VERSIONS = {
    "identity": 3,
    "image": 2,
    "volume": 2,
}
OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "default"
OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"
TIME_ZONE = "UTC"

Start the service (on the control node, 192.168.1.51)

#systemctl enable httpd.service

#systemctl restart httpd.service

Restart the service (on the authentication node, 192.168.1.52)

#systemctl restart memcached.service

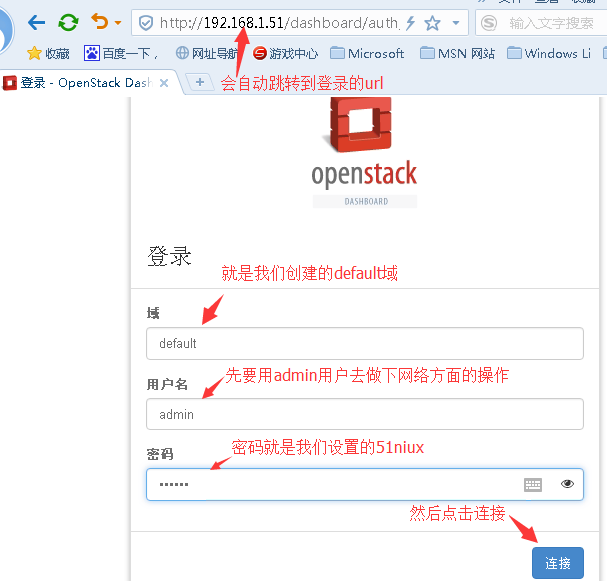

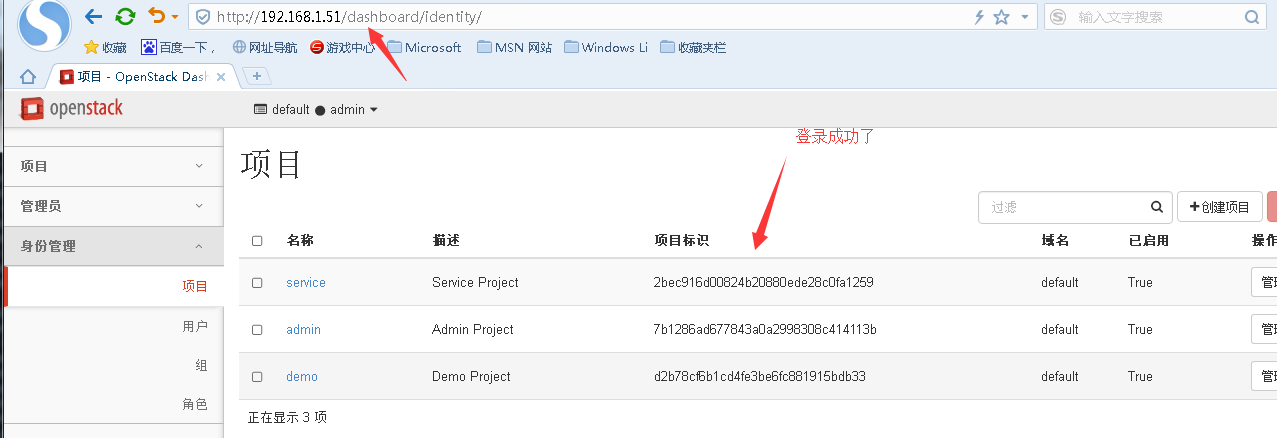

八、验证

http://192.168.1.51/dashboard #访问这个URL来测试一下。

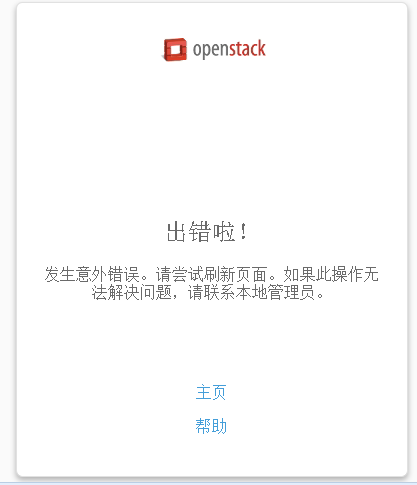

#然后可能就蛋疼了:

#然后查看后台日志看看咋回事啊:

#tail -f /var/log/httpd/error_log

[Sat Sep 02 16:40:21.593314 2017] [:error] [pid 90790] Login successful for user "admin". #提示admin登录成功了 [Sat Sep 02 16:40:26.384586 2017] [:error] [pid 90790] Internal Server Error: /dashboard/auth/login/ #但是后台报错了 [Sat Sep 02 16:40:26.384678 2017] [:error] [pid 90790] Traceback (most recent call last): [Sat Sep 02 16:40:26.384686 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/core/handlers/base.py", line 132, in get_response [Sat Sep 02 16:40:26.384691 2017] [:error] [pid 90790] response = wrapped_callback(request, *callback_args, **callback_kwargs) [Sat Sep 02 16:40:26.384725 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/views/decorators/debug.py", line 76, in sensitive_post_parameters_wrapper [Sat Sep 02 16:40:26.384737 2017] [:error] [pid 90790] return view(request, *args, **kwargs) [Sat Sep 02 16:40:26.384783 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/utils/decorators.py", line 110, in _wrapped_view [Sat Sep 02 16:40:26.384787 2017] [:error] [pid 90790] response = view_func(request, *args, **kwargs) [Sat Sep 02 16:40:26.384809 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/views/decorators/cache.py", line 57, in _wrapped_view_func [Sat Sep 02 16:40:26.384818 2017] [:error] [pid 90790] response = view_func(request, *args, **kwargs) [Sat Sep 02 16:40:26.384822 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/openstack_auth/views.py", line 103, in login [Sat Sep 02 16:40:26.384838 2017] [:error] [pid 90790] **kwargs) [Sat Sep 02 16:40:26.384844 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/views/decorators/debug.py", line 76, in sensitive_post_parameters_wrapper [Sat Sep 02 16:40:26.384848 2017] [:error] [pid 90790] return view(request, *args, **kwargs) [Sat Sep 02 16:40:26.384852 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/utils/decorators.py", line 110, in _wrapped_view [Sat Sep 02 
16:40:26.384861 2017] [:error] [pid 90790] response = view_func(request, *args, **kwargs) [Sat Sep 02 16:40:26.384887 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/views/decorators/cache.py", line 57, in _wrapped_view_func [Sat Sep 02 16:40:26.384891 2017] [:error] [pid 90790] response = view_func(request, *args, **kwargs) [Sat Sep 02 16:40:26.384894 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/contrib/auth/views.py", line 51, in login [Sat Sep 02 16:40:26.384899 2017] [:error] [pid 90790] auth_login(request, form.get_user()) [Sat Sep 02 16:40:26.384904 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/contrib/auth/__init__.py", line 110, in login [Sat Sep 02 16:40:26.384911 2017] [:error] [pid 90790] request.session.cycle_key() [Sat Sep 02 16:40:26.384917 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/contrib/sessions/backends/base.py", line 285, in cycle_key [Sat Sep 02 16:40:26.384926 2017] [:error] [pid 90790] self.create() [Sat Sep 02 16:40:26.384949 2017] [:error] [pid 90790] File "/usr/lib/python2.7/site-packages/django/contrib/sessions/backends/cache.py", line 48, in create [Sat Sep 02 16:40:26.384953 2017] [:error] [pid 90790] "Unable to create a new session key. " [Sat Sep 02 16:40:26.384982 2017] [:error] [pid 90790] RuntimeError: Unable to create a new session key. It is likely that the cache is unavailable. #不能创建一个新的session key

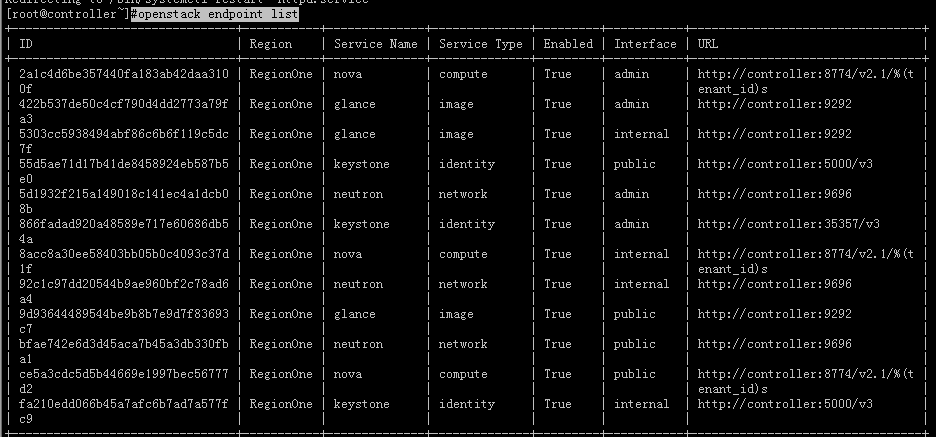

#openstack endpoint list #查看keystone endpoint,或者查看详细的信息,在后面加上--long

#openstack-status #查看openstack的状态

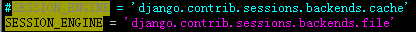

然后解决办法(这不是最终的解决方案,请看到最后):

因为它周期性连接到非本地缓存有问题。官网写的有bug。

把/etc/openstack-dashboard/local_settings 中 SESSION_ENGINE = 'django.contrib.sessions.backends.cache' 应改为 SESSION_ENGINE = 'django.contrib.sessions.backends.file'

然后重启httpd服务和memcached服务。

#但是还是不对,为啥呢,因为不使用缓存貌似不对,还是觉得那里有问题,然后继续看日志

#tail -f /var/log/httpd/error_log

[Sun Sep 03 00:51:41.756215 2017] [core:notice] [pid 91278] AH00094: Command line: '/usr/sbin/httpd -D FOREGROUND' [Sat Sep 02 16:51:44.954369 2017] [:error] [pid 91280] Could not process panel theme_preview: Dashboard with slug "developer" is not registered. [Sat Sep 02 16:52:33.564550 2017] [:error] [pid 91280] Login successful for user "admin".

#那还得用原来的SESSION_ENGINE = 'django.contrib.sessions.backends.cache' ,提示一下默认的memcache是监听在127.0.0.1的11211,查看一下自己/etc/openstack-dashboard/local_settings里面CACHES = { #里面定义的memcache的IP和端口是否是正确的。问题是出在这里的。然后memcached调整好了,配置文件还是用SESSION_ENGINE = 'django.contrib.sessions.backends.cache' ,重启httpd服务再次登录。
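Since the root cause was a mismatch between where the dashboard looks for memcached and where memcached actually listens, it helps to print both values side by side. The sketch below extracts them with sed; it assumes the file layouts used in this post (a `'LOCATION': 'host:port'` entry in local_settings and an `OPTIONS="-l ADDRESS"` line in /etc/sysconfig/memcached). Both function names are hypothetical.

```shell
#!/usr/bin/env bash
# dashboard_cache_location FILE
# Prints the first uncommented 'LOCATION' value (host:port) in FILE.
dashboard_cache_location() {
    sed -n -e '/^[[:space:]]*#/d' \
        -e "s/.*'LOCATION': *'\([^']*\)'.*/\1/p" "$1" | head -n 1
}

# memcached_listen_addr FILE
# Prints the first address given after -l in an OPTIONS="-l ..." line.
memcached_listen_addr() {
    sed -n 's/^OPTIONS="-l \([^",]*\).*/\1/p' "$1" | head -n 1
}
```

On the control node, `dashboard_cache_location /etc/openstack-dashboard/local_settings` should name a host that resolves to the address that `memcached_listen_addr /etc/sysconfig/memcached` prints on the authentication node; if the second command prints 127.0.0.1 or nothing, the dashboard cannot reach the cache.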

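9. Wrapping up

Being able to log in to the page does not mean everything is fine; it only means the page accepts logins. OpenStack's components are fairly complex, and a single oversight can produce errors that go unnoticed unless you read the logs, leaving you to troubleshoot only later when some operation fails. So it is worth scanning all the logs once now.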
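When scanning many log files, a per-component error count is a quick way to see where to look first. The sketch below assumes the usual OpenStack log line layout of `date time pid LEVEL logger ...`, so the level is the fourth field and the logger name the fifth; `count_errors_by_logger` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# count_errors_by_logger FILE...
# Prints "COUNT LOGGER" for every logger that emitted ERROR lines,
# highest count first.
count_errors_by_logger() {
    awk '$4 == "ERROR" { n[$5]++ }
         END { for (k in n) print n[k], k }' "$@" | sort -rn
}
```

For example, `count_errors_by_logger /var/log/neutron/*.log` on the network node gives one line per failing component, which can then be followed up with grep on that logger name.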

9.1 Taking the network node as an example:

# tail -f /var/log/neutron/*.log|grep ERR #Look for any errors

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent [-] Unable to enable dhcp for ea100065-c97c-45ae-83fd-a2ffb1c815fd.

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent Traceback (most recent call last):

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/dhcp/agent.py", line 112, in call_driver

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent getattr(driver, action)(**action_kwargs)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/dhcp.py", line 208, in enable

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent interface_name = self.device_manager.setup(self.network)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/dhcp.py", line 1234, in setup

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent namespace=network.namespace):

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/ip_lib.py", line 1070, in ensure_device_is_ready

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent dev.link.set_up()

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/ip_lib.py", line 527, in set_up

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent return self._as_root([], ('set', self.name, 'up'))

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/ip_lib.py", line 384, in _as_root

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent use_root_namespace=use_root_namespace)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/ip_lib.py", line 96, in _as_root

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent log_fail_as_error=self.log_fail_as_error)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/ip_lib.py", line 105, in _execute

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent log_fail_as_error=log_fail_as_error)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/utils.py", line 122, in execute

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent execute_rootwrap_daemon(cmd, process_input, addl_env))

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/neutron/agent/linux/utils.py", line 108, in execute_rootwrap_daemon

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent return client.execute(cmd, process_input)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/client.py", line 124, in execute

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent self._ensure_initialized()

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/client.py", line 109, in _ensure_initialized

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent self._initialize()

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/client.py", line 79, in _initialize

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent (stderr,))

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent Exception: Failed to spawn rootwrap process.

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent stderr:

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent Traceback (most recent call last):

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/bin/neutron-rootwrap-daemon", line 10, in <module>

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent sys.exit(daemon())

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/cmd.py", line 57, in daemon

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent return main(run_daemon=True)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/cmd.py", line 98, in main

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent daemon_mod.daemon_start(config, filters)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/daemon.py", line 98, in daemon_start

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent server = manager.get_server()

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib64/python2.7/multiprocessing/managers.py", line 493, in get_server

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent self._authkey, self._serializer)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib64/python2.7/multiprocessing/managers.py", line 162, in __init__

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent self.listener = Listener(address=address, backlog=16)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib/python2.7/site-packages/oslo_rootwrap/jsonrpc.py", line 66, in __init__

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent self._socket.bind(address)

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent File "/usr/lib64/python2.7/socket.py", line 224, in meth

2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent return getattr(self._sock,name)(*args)

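2017-09-03 17:46:21.400 1470 ERROR neutron.agent.dhcp.agent socket.error: [Errno 13] Permission denied

#Sure enough, there is still a problem to track down. It is not only the DHCP agent: the other Neutron logs are also full of "Failed to spawn rootwrap process." and similar messages.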
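A long traceback like this is easier to triage when it is boiled down to "which logger complained, and what was the final exception". Here is a rough sketch of that idea, not a real log analyser. The names `summarize_errors`, `LOG_LINE` and `EXCEPTION_LINE` are invented for this example, the line layout is assumed to match the excerpt above (date, time, pid, level, logger, message), and the rule for spotting exception lines is a simple heuristic.

```python
import re
from collections import Counter

# Layout assumed from the excerpt above: date time pid LEVEL logger message
LOG_LINE = re.compile(
    r"^\d{4}-\d{2}-\d{2} [\d:.]+ \d+ (?P<level>[A-Z]+) (?P<logger>\S+) ?(?P<message>.*)$"
)
# Heuristic (an assumption): a message starting with "SomeError:",
# "Exception:" or "module.error:" is the last line of a traceback.
EXCEPTION_LINE = re.compile(r"^[\w.]*(?:Error|Exception|error):")


def summarize_errors(lines):
    """Count ERROR records per logger and collect distinct exception lines."""
    counts = Counter()
    exceptions = []
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match is None or match.group("level") != "ERROR":
            continue
        counts[match.group("logger")] += 1
        message = match.group("message").strip()
        if EXCEPTION_LINE.match(message) and message not in exceptions:
            exceptions.append(message)
    return counts, exceptions
```

Fed with the lines of a Neutron log file (for example `summarize_errors(open(path))`), it returns a counter of ERROR records per logger plus the distinct exception lines, which for the excerpt above are the rootwrap failure and the `socket.error` line.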

# cat /etc/sudoers.d/neutron #The sudo rules are in place, so this is not a missing sudo permission.

Defaults:neutron !requiretty

neutron ALL = (root) NOPASSWD: /usr/bin/neutron-rootwrap /etc/neutron/rootwrap.conf *

neutron ALL = (root) NOPASSWD: /usr/bin/neutron-rootwrap-daemon /etc/neutron/rootwrap.conf

#With the rules above in place, the filters_path parameter in /etc/neutron/rootwrap.conf names the directories whose filter files list the commands that may be run as root.

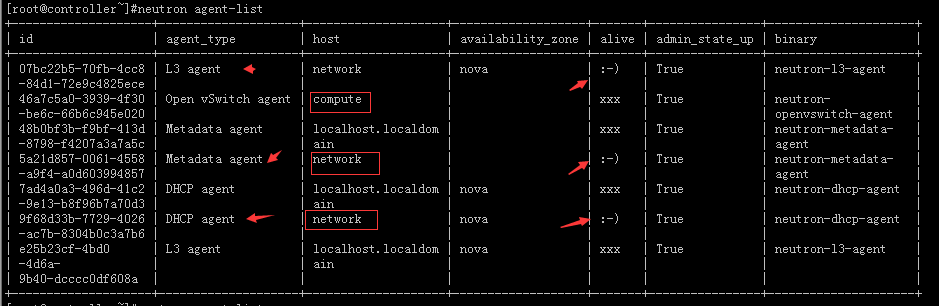

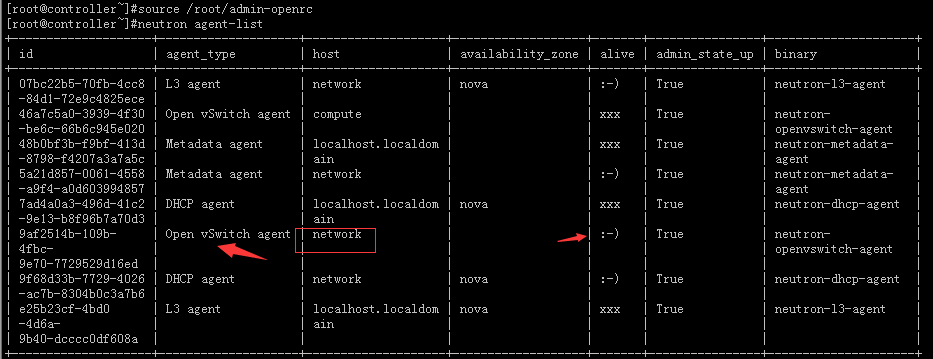

#neutron agent-list #First, list the agents

#Only three agents on the network node are healthy. The Open vSwitch agent does not appear at all.

#So go to the network node and check the service status.

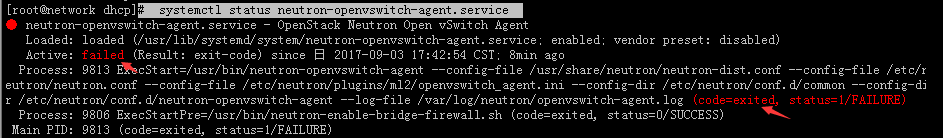

# systemctl status neutron-openvswitch-agent.service

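#The service is clearly not running. My first guess was that this stopped service caused the whole chain of errors, rather than any permission or configuration problem.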

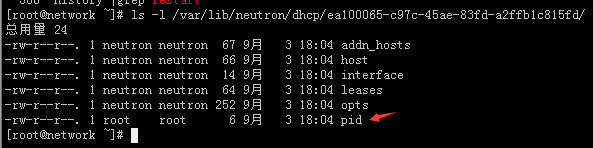

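# ls -l /var/lib/neutron/dhcp/ea100065-c97c-45ae-83fd-a2ffb1c815fd/ #The directory is empty, which fits the log above: DHCP could not be enabled for this network.

#I tried to bring up the Open vSwitch agent first. I went over the configuration and the procedure again and found nothing different. Then it occurred to me to check whether SELinux was off: I had recently set up a new machine for testing, and SELinux had not been disabled on it. With that taken care of, I ran systemctl start neutron-openvswitch-agent.service again, and this time the service started.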
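Since a forgotten SELinux setting cost a fair amount of time here, a quick check on every node is cheap insurance. The snippet below is a small sketch with an invented function name, `selinux_mode`. It relies on the selinuxfs file `/sys/fs/selinux/enforce`, which holds `1` in enforcing mode and `0` in permissive mode, and it treats a missing file as "disabled". The `getenforce` command reports the same thing.

```python
from pathlib import Path


def selinux_mode(enforce_path="/sys/fs/selinux/enforce"):
    """Return "enforcing", "permissive" or "disabled" for the local host."""
    path = Path(enforce_path)
    if not path.exists():
        # selinuxfs is not mounted when SELinux is disabled
        return "disabled"
    return "enforcing" if path.read_text().strip() == "1" else "permissive"
```

Calling `selinux_mode()` on each node before starting the Neutron agents shows at a glance whether one machine was left in enforcing mode.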

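#systemctl restart neutron-openvswitch-agent.service neutron-l3-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service #To be safe, I restarted the other services on the network node as well.

#Then I ran # tail -f /var/log/neutron/*.log|grep ERR again and watched for a while. This error no longer appeared.

#You can see that a set of files has been generated, along with a pid file owned by root.

#Then check on the controller node:

#The output shows that the agent is up as well.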

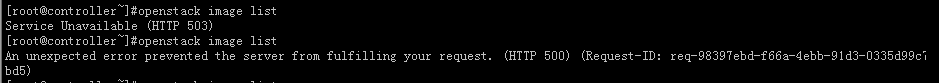

9.2 Another example:

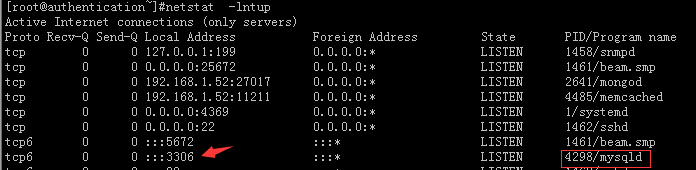

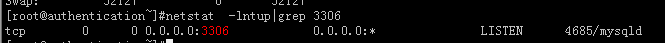

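#I was about to create a test instance as the demo user, but a whole series of lookups returned nothing. Clearly something is wrong with the connection between the controller node and MySQL.

#openstack image list #Listing the images fails too

#Check the state of the MySQL service:

#The screenshot shows it listening on tcp6 only, not on tcp.

#On the controller node server (192.168.1.52), edit the configuration file: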

#vim /etc/my.cnf.d/mariadb-server.cnf

[mysqld]

bind-address=0.0.0.0 #Add this line

#service mariadb restart #Restart the mariadb service

#Then check again:
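The "tcp or tcp6" check can also be done on the text that `netstat -lnt` prints, without reading it by eye. This is a minimal sketch. The function name `listening_protocols` is made up, and it assumes the usual six-column netstat layout (Proto, Recv-Q, Send-Q, Local Address, Foreign Address, State).

```python
def listening_protocols(netstat_text, port):
    """Return the protocol names (e.g. {"tcp", "tcp6"}) listening on `port`."""
    protocols = set()
    for line in netstat_text.splitlines():
        fields = line.split()
        # Skip headers and anything that is not a LISTEN socket
        if len(fields) < 6 or fields[5] != "LISTEN":
            continue
        # The port is whatever follows the last colon of the local address
        if fields[3].rsplit(":", 1)[-1] == str(port):
            protocols.add(fields[0])
    return protocols
```

With the output of `netstat -lnt` captured into a string, `listening_protocols(text, 3306)` returns the set of protocols that have a listener on the MariaDB port, so it is easy to see whether a plain `tcp` entry is present after the restart.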

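#Naturally, the state of the MySQL connection directly affects the results of all your queries.

#That is still not the end of it. MySQL's default maximum number of connections should be raised as well: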

#cat /etc/my.cnf

[mysqld]

max_connections=10000

#This keeps the controller node from reporting:

sqlalchemy.exc.OperationalError: (pymysql.err.OperationalError) (1040, u'Too many connections')

#However, the method above does not take effect for the mariadb service on CentOS 7. After restarting mariadb and logging in to MySQL, the value has not changed:

MariaDB [(none)]> select VARIABLE_VALUE from information_schema.GLOBAL_VARIABLES where VARIABLE_NAME='MAX_CONNECTIONS';

+----------------+
| VARIABLE_VALUE |
+----------------+
| 214            |
+----------------+

#Temporary workaround:

MariaDB [(none)]> set global max_connections = 16384;
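The odd value 214 is usually explained by the open-file limit of the mysqld process: when the server cannot get enough file descriptors, it lowers max_connections to fit. The sketch below is a simplified model of that adjustment, not the server's actual code. The function name and both constants are assumptions for illustration (10 reserved descriptors and a minimum table cache of 400, with two descriptors per cached table), chosen because they reproduce the 214 seen above with the common default limit of 1024.

```python
RESERVED_FILES = 10         # descriptors assumed to be kept for the server itself
TABLE_OPEN_CACHE_MIN = 400  # minimum table cache assumed by this model


def effective_max_connections(requested, open_files_limit):
    """Estimate the max_connections value left after the file-limit cap."""
    ceiling = open_files_limit - RESERVED_FILES - 2 * TABLE_OPEN_CACHE_MIN
    return min(requested, ceiling)
```

Under this model, `effective_max_connections(10000, 1024)` gives 214, while a limit of 65535 leaves a requested value of 16384 untouched. That is why raising the file limit of the service matters more than the number written in my.cnf.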

#The permanent fix is as follows:

# vi /usr/lib/systemd/system/mariadb.service

[Service]

Type=notify

User=mysql

Group=mysql

#Add the following two lines

LimitNOFILE=65535

LimitNPROC=65535

# vim /etc/my.cnf.d/mariadb-server.cnf

[mysqld]

max_connections=16384

# systemctl daemon-reload

# service mariadb restart

# cat /proc/$(cat /var/run/mariadb/mariadb.pid)/limits #Use this to check whether the raised file-descriptor limit has taken effect.
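The limits file is a fixed-width table, so the one row of interest can be pulled out with a few lines of code. This is only a sketch. The name `read_limit` is invented, and the parser assumes the usual `/proc/<pid>/limits` layout, where the limit name is separated from the soft and hard values by at least two spaces.

```python
import re

# name, two or more spaces, soft limit, whitespace, hard limit
LIMIT_ROW = re.compile(r"^(?P<name>.+?)\s{2,}(?P<soft>\S+)\s+(?P<hard>\S+)")


def read_limit(limits_text, name):
    """Return (soft, hard) as strings for the row called `name`, or None."""
    for line in limits_text.splitlines():
        match = LIMIT_ROW.match(line)
        if match and match.group("name") == name:
            return match.group("soft"), match.group("hard")
    return None
```

After the restart, reading the file of the mariadb process and calling `read_limit(text, "Max open files")` should return the value set with LimitNOFILE for both the soft and the hard limit.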

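#I used these two small errors to make one point: being able to log in to the dashboard, or to carry out a few operations, does not mean the deployment is finished. That is only the beginning, so read the logs and see what errors they contain.

#If you run into the following error while creating a network and its subnet:

Error: Failed to create network "LAN01_172.15.0.0": Unable to create the network. No tenant network is available for allocation. Neutron server returns request_ids: ['req-311eb217-2507-445b-b178-c8a093ca0304']

#The cause is that no tunnel ID range has been configured. Check whether the [ml2_type_vxlan] section is missing its setting, since I chose the VXLAN network type.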
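A missing tunnel ID range is easy to overlook in a long ml2_conf.ini, so here is a small sketch that checks for it. The function name `vxlan_vni_ranges` is made up. It assumes the range lives in the `vni_ranges` option of the `[ml2_type_vxlan]` section, written as comma-separated `min:max` pairs such as `1:1000`, and that the file is typically /etc/neutron/plugins/ml2/ml2_conf.ini.

```python
import configparser


def vxlan_vni_ranges(ini_text):
    """Return the configured VXLAN ID ranges as a list of (low, high) tuples."""
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(ini_text)
    # The fallback covers both a missing section and a missing option
    raw = parser.get("ml2_type_vxlan", "vni_ranges", fallback="")
    ranges = []
    for chunk in raw.split(","):
        chunk = chunk.strip()
        if chunk:
            low, high = chunk.split(":")
            ranges.append((int(low), int(high)))
    return ranges
```

An empty list from `vxlan_vni_ranges(open(path).read())` means Neutron has no VXLAN IDs to hand out, which matches the "No tenant network is available for allocation" error.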

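#With a network created and three simple instances launched, all of them usable through the console, the basic deployment of a small OpenStack cluster is complete.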